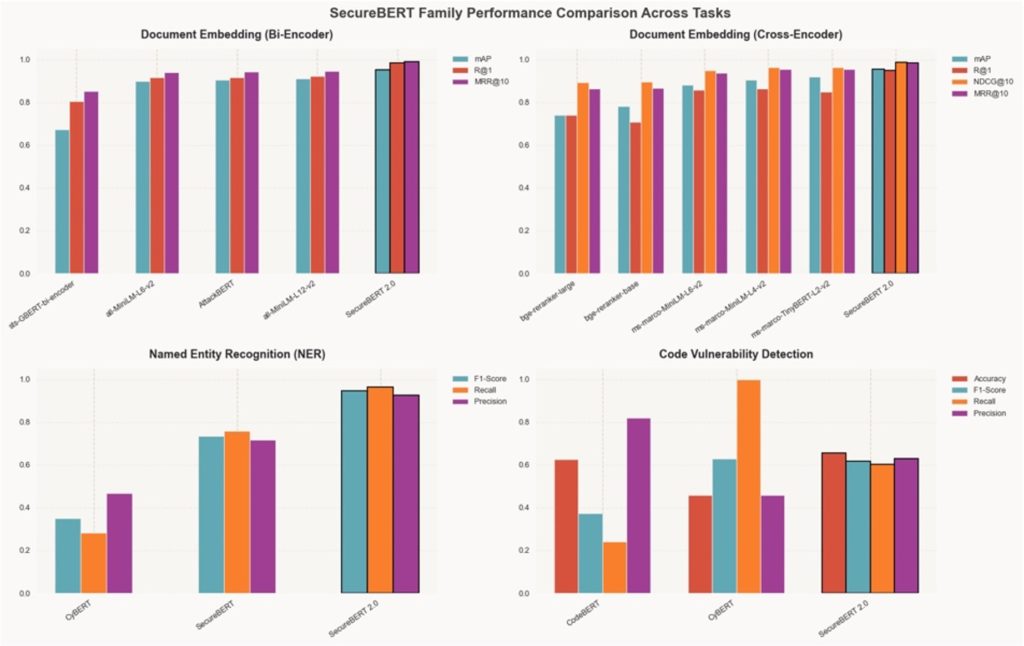

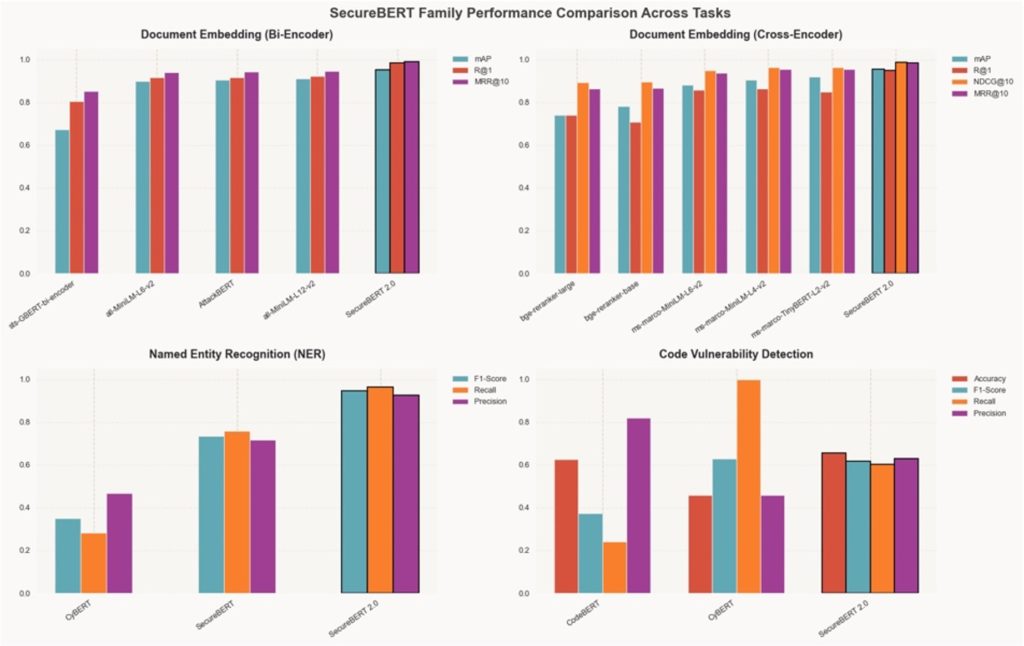

Right this moment, we’re excited to share that the SecureBERT 2.0 mannequin is offered on HuggingFace and GitHub with an accompanying analysis paper. This launch marks a major milestone, constructing on the already extensively adopted SecureBERT mannequin to unlock much more superior cybersecurity purposes. Simply see this unparalleled efficiency throughout real-world duties:

In 2022, the primary SecureBERT mannequin was launched by Ehsan and a workforce of researchers from Carnegie Mellon College and UNC Charlotte as a pioneering language mannequin designed particularly for the cybersecurity area. It bridged the hole between general-purpose NLP fashions like BERT and the specialised wants of cybersecurity professionals—enabling AI programs to perceive the technical language of threats, vulnerabilities, and exploits.

By December 2023, SecureBERT ranked among the many high 100 most downloaded fashions on HuggingFace out of the roughly 500,000 fashions then obtainable on the repository. It gained vital recognition throughout the cybersecurity group and stays in energetic use by main organizations, together with the MITRE Risk Report ATT&CK Mapper (TRAM) and CyberPeace Institute.

On this weblog, we’ll mirror on the impression of the unique SecureBERT mannequin, element the numerous developments made in SecureBERT 2.0, and discover some real-world purposes of this highly effective new mannequin.

The impression of the unique SecureBERT mannequin

Safety analysts at enterprises and businesses commit an incredible period of time to parsing by numerous safety alerts to establish, analyze, categorize, and report on potential threats. It’s an essential course of that, when completed completely manually, is time-consuming, costly, and vulnerable to human error.

SecureBERT gave researchers and analysts a instrument that might course of safety reviews, malware analyses, and vulnerability write-ups with contextual accuracy by no means earlier than doable. Even in the present day, it serves as a useful instrument for cybersecurity specialists at a number of the world’s high businesses, universities, and labs.

Nonetheless, SecureBERT had a number of limitations. It struggled to deal with long-context inputs comparable to detailed menace intelligence reviews and mixed-format knowledge combining textual content and code. Since SecureBERT was educated on RoBERTa-base, a traditional BERT encoder with a 512-token context restrict and no FlashAttention, it was slower and extra memory-intensive throughout coaching and inference. In distinction, SecureBERT 2.0, constructed on ModernBERT, advantages from an optimized structure with prolonged context, sooner throughput, decrease latency, and lowered reminiscence utilization.

With SecureBERT 2.0, we addressed these gaps in coaching knowledge and superior the structure to ship a mannequin that was much more succesful and contextually conscious than ever. Whereas the unique SecureBERT was a standalone base mannequin, the two.0 model consists of a number of fine-tuned variants specializing in numerous real-world cybersecurity purposes.

Introducing SecureBERT 2.0

SecureBERT 2.0 brings larger contextual relevance and area experience for cybersecurity, understanding code sources and programming logic in a approach its predecessor merely couldn’t. The important thing here’s a coaching dataset that’s bigger, extra numerous, and strategically curated to assist the mannequin higher seize delicate safety nuances and ship extra correct, dependable, and context-aware menace evaluation.

Whereas giant autoregressive fashions comparable to GPT-5 excel at producing language, encoder-based fashions like SecureBERT 2.0 are designed to grasp, symbolize, and retrieve info with precision—a elementary want in cybersecurity. Generative fashions predict the subsequent token; encoder fashions remodel complete inputs into dense, semantically wealthy embeddings that seize relationships, context, and which means with out fabricating content material.

This distinction makes SecureBERT 2.0 splendid for high-precision, security-critical purposes the place factual accuracy, explainability, and velocity are paramount. Constructed on the ModernBERT structure, it makes use of hierarchical long-context encoding and multi-modal text-and-code understanding to investigate complicated menace knowledge and supply code effectively.

Let’s check out how SecureBERT 2.0 helps safety analysts in real-world purposes.

Actual world purposes of SecureBERT 2.0

Think about you’re a SOC analyst tasked with investigating a suspected provide chain compromise. Historically, this may contain correlating open-source intelligence, inner alerts, and vulnerability reviews in a course of which might take a number of weeks of guide knowledge evaluation and cross-referencing.

With SecureBERT 2.0, you may merely embed all related belongings—reviews, codes, CVE knowledge, and menace intelligence, for instance—within the system. The mannequin instantly surfaces connections between obscure indicators and beforehand unseen infrastructure patterns.

This is only one potential situation of many; SecureBERT 2.0 can assist and streamline a wealth of potential safety purposes:

- Risk Intelligence Correlation: Linking indicators of compromise throughout a number of sources to uncover marketing campaign patterns and adversary ways

- Incident Triage & Alert Prioritization: Embedding alerts and reviews to detect duplicates, associated incidents, or identified CVEs—lowering noise and analyst workload

- Safe Code & Vulnerability Detection: Figuring out dangerous patterns, insecure dependencies, and potential zero-day vulnerabilities in supply code

- Semantic Search & RAG for Safety Ops: Offering context-aware retrieval throughout inner data bases, menace feeds, and documentation for sooner analyst response

- Coverage and Compliance Search: Enabling correct semantic lookup throughout giant regulatory and governance corpora

Not like generative LLMs that create textual content, SecureBERT 2.0 interprets and buildings info to ship sooner inference, decrease compute prices, and reduce the chance of hallucination. This makes it a trusted basis mannequin for enterprise, protection, and analysis environments the place precision and knowledge integrity matter most.

Below the hood of SecureBERT 2.0

There are three elements to the SecureBERT 2.0 structure that make this mannequin such a major development: its ModernBERT basis, its knowledge growth, and smarter method to pretraining.

SecureBERT 2.0 is powered by ModernBERT, a next-generation transformer designed for long-document processing. Prolonged consideration mechanisms and hierarchical encoding enable the mannequin to seize each fine-grained syntax and high-level construction—essential for analyzing lengthy, multi-section safety reviews.

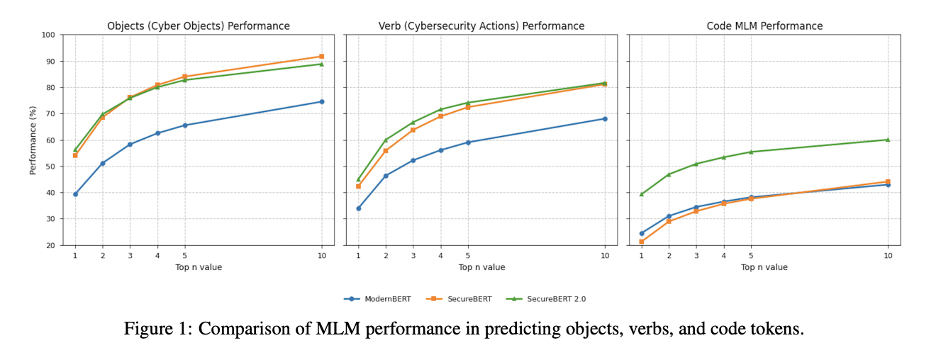

The mannequin is educated on 13 instances extra knowledge than the unique SecureBERT with a brand new corpus that features curated safety articles and technical blogs, filtered cybersecurity knowledge, code vulnerability repositories, and incident narratives. In complete, this dataset covers 13 billion textual content tokens and 53 million code tokens.

Lastly, a microannealing pretraining curriculum progressively transitions from curated to real-world knowledge, balancing high quality and variety. Focused masking teaches the mannequin to foretell essential safety actions and entities like “bypass,” “encrypt,” or “CVE,” strengthening area illustration.

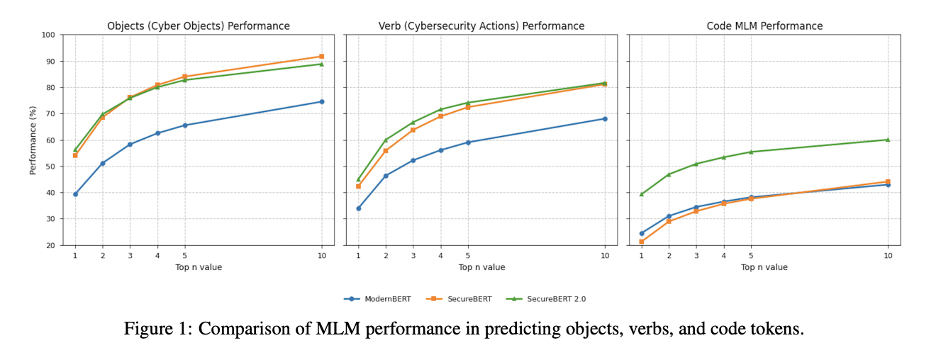

The efficiency of SecureBERT 2.0 is a marked enchancment over its predecessor and different evaluated fashions throughout benchmarks; the main points may be present in full analysis paper.

Trying forward: AI for safety at Cisco

SecureBERT 2.0 demonstrates what’s doable when structure and knowledge are purpose-built for cybersecurity. It joins different fashions, just like the generative Basis-Sec-8B from Cisco’s Basis AI workforce, as a part of Cisco’s continued dedication to making use of AI responsibly inside the area of cybersecurity.

We’re excited to share this mannequin with the world, to see a number of the modern methods it is going to be embraced by the safety group, and to proceed exploring potential usages for taxonomy creation, data graph technology, and different cutting-edge purposes.

You may get began with the SecureBERT 2.0 mannequin on HuggingFace and GitHub in the present day, and dig into our analysis paper for extra element and efficiency benchmarking.

The way forward for cybersecurity AI is securely clever.