In today's rapidly evolving AI landscape, organizations face a critical challenge: harnessing the transformative power of generative AI while maintaining robust security and compliance standards. As enterprises deploy increasingly sophisticated GenAI applications, the need for comprehensive security across the entire AI lifecycle has never been more urgent.

Today, Cisco is pleased to announce a native integration of Cisco AI Defense runtime guardrails with NVIDIA NeMo Guardrails, part of NVIDIA Enterprise software, bringing together two powerful solutions to maximize cybersecurity for enterprise AI deployments.

Why Guardrails Matter: The Critical First Line of Defense

Generative AI applications are fundamentally different from traditional software. They are dynamic and probabilistic, and they can produce unexpected outputs based on user interactions. Without proper safeguards, GenAI applications can generate harmful, biased, or inappropriate content, leak sensitive information through prompt injection attacks, hallucinate facts, deviate from intended use cases, or violate regulatory compliance requirements.

Runtime guardrails serve as the essential safety mechanisms that monitor and control AI behavior in real time. Think of them as intelligent traffic controllers that keep your AI applications within safe, compliant boundaries while maintaining performance and user experience. As organizations move from AI experimentation to production deployments, these guardrails have become non-negotiable components of any responsible AI strategy.

Guardrails are only as effective as their underlying detection models and the frequency of the updates that capture the latest threat intelligence. Enterprises shouldn't rely on the built-in guardrails created by model developers: they differ from model to model, are largely optimized for performance over security, and depend on alignment that is easily broken when the model is changed. Enterprise guardrails, such as those from Cisco AI Defense and NVIDIA NeMo, provide a common layer of protection across models, allowing AI teams to focus fully on development.

NVIDIA NeMo Guardrails: A Leading Open-Source Toolkit

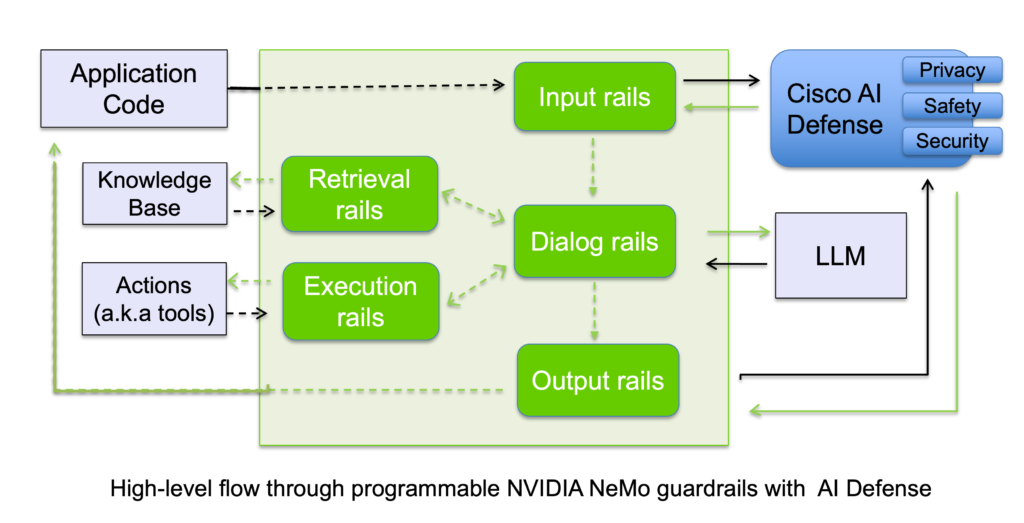

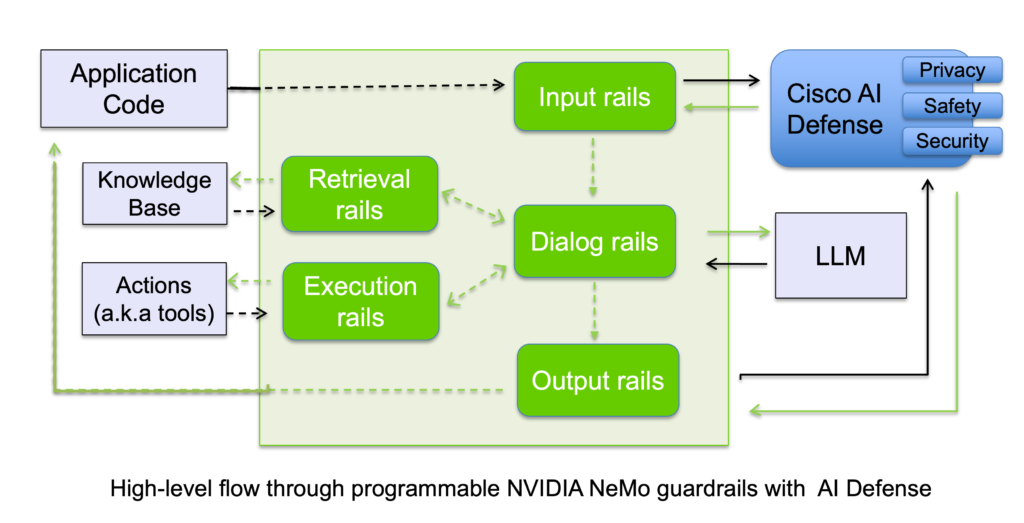

NVIDIA NeMo Guardrails has emerged as a leading open-source framework for building programmable guardrails for generative AI applications. The toolkit lets developers define input and output boundaries for LLM interactions, implement topical guardrails to keep conversations on track, enforce fact-checking and hallucination prevention, and control dialogue flow and user interaction patterns. As a framework-level solution, NeMo Guardrails provides the structural foundation for AI safety, giving developers the flexibility to define rules and policies tailored to their specific use cases.
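As a rough illustration of what input, topical, and output boundaries look like around a model call, the sketch below wraps a stand-in model in three plain-Python checks. It does not use the NeMo Guardrails API. Every name in it (`input_rail`, `topical_rail`, `output_rail`, `guarded_chat`, the regex patterns, and the topic list) is a hypothetical stand-in chosen for this example, and real detection would rely on far stronger models than these simple patterns.

```python
import re
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class RailResult:
    allowed: bool
    reason: Optional[str] = None


# Hypothetical rules for illustration only; not taken from any product.
BLOCKED_INPUT = [re.compile(r"ignore (all )?previous instructions", re.IGNORECASE)]
ALLOWED_TOPICS = {"billing", "shipping"}
SSN_LIKE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
REFUSAL = "Sorry, I can't help with that."


def input_rail(user_message: str) -> RailResult:
    """Reject messages that match a known prompt-injection phrase."""
    for pattern in BLOCKED_INPUT:
        if pattern.search(user_message):
            return RailResult(False, "possible prompt injection")
    return RailResult(True)


def topical_rail(user_message: str) -> RailResult:
    """Allow only messages that mention at least one permitted topic."""
    words = set(re.findall(r"[a-z]+", user_message.lower()))
    if words & ALLOWED_TOPICS:
        return RailResult(True)
    return RailResult(False, "off-topic request")


def output_rail(model_reply: str) -> RailResult:
    """Block replies that contain something shaped like a US SSN."""
    if SSN_LIKE.search(model_reply):
        return RailResult(False, "sensitive data in output")
    return RailResult(True)


def guarded_chat(user_message: str, model: Callable[[str], str]) -> str:
    """Run the input-side rails, call the model, then run the output rail."""
    for rail in (input_rail, topical_rail):
        if not rail(user_message).allowed:
            return REFUSAL
    reply = model(user_message)
    if not output_rail(reply).allowed:
        return REFUSAL
    return reply
```

Passing the model in as a plain callable keeps the checks independent of any particular LLM, which is the property that lets the same rules sit in front of different models.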

The framework's widespread adoption across the industry reflects its robust architecture and developer-friendly approach. Organizations appreciate the ability to create custom guardrails that align with their unique business requirements while leveraging NVIDIA AI infrastructure and acceleration.

Cisco AI Defense: A Comprehensive AI Security Solution

Runtime guardrails, while essential, are just one piece of the AI security puzzle. Cisco AI Defense takes a holistic approach to AI security, protecting organizations across the entire AI lifecycle from development through production.

AI Defense uses a three-step framework to protect against AI safety, security, and privacy risks:

- Discovery: automatically inventory AI assets, including models, agents, knowledge bases, and vector stores, across your distributed cloud environments.

- Detection: uncover model and application vulnerabilities, including supply chain risks and susceptibility to jailbreaks, unsafe responses, and more.

- Protection: protect runtime applications with proprietary safety, security, and privacy guardrails, updated with the latest threat intelligence.

The security journey doesn't end at deployment. Cisco AI Defense provides continuous validation through ongoing testing to identify new vulnerabilities in models and applications. As new risks emerge, additional guardrails can be introduced to address them, or models can be swapped. This ensures that deployed models maintain their security posture over time and continue to meet internal and external standards.

Rather than leaving security implementation to individual application teams, organizations can enforce enterprise-wide runtime controls that align AI behavior with corporate security and compliance requirements. Through its integration with NVIDIA NeMo Guardrails, Cisco AI Defense makes these controls seamlessly accessible within developer workflows, embedding security as a native part of the AI development lifecycle. This continuous validation and centralized protection model ensures that deployed models and applications maintain a strong security posture over time, while vulnerability reports translate findings into clear insights mapped to industry and regulatory standards.

Better Together: Boosting Cybersecurity Defenses with Cisco Accelerated by NVIDIA

The native integration of Cisco AI Defense with NVIDIA NeMo Guardrails delivers powerful cybersecurity for enterprise AI deployments. Rather than relying on a single layer of protection, this integration gives developers the flexibility to combine the right guardrails for each aspect of their applications, whether the focus is safety, security, privacy, or conversational flow and topic control.

By bringing together NVIDIA NeMo Guardrails' open-source framework for defining and enforcing conversational and contextual boundaries with Cisco AI Defense's enterprise-grade runtime guardrails for protecting data, detecting threats, and maintaining compliance, organizations gain a modular and interoperable architecture for safeguarding AI in production.

This collaboration allows developers to mix and match guardrails across domains, ensuring that AI systems behave responsibly, securely, and consistently without sacrificing performance or agility. NeMo Guardrails provides the foundation for structured, customizable controls within AI workflows, while Cisco AI Defense adds continuously updated runtime protection powered by real-time threat intelligence.
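One way to picture mixing and matching guardrails across domains is a small catalog from which each application selects the domains it needs. The sketch below is a minimal, assumption-laden illustration: the `Guardrail` class, the `CATALOG` entries, the `source` labels, and the string checks are all hypothetical and do not represent the Cisco AI Defense or NeMo Guardrails interfaces.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Guardrail:
    name: str
    domain: str                    # e.g. "security", "privacy", "topic"
    source: str                    # hypothetical label for where the rail comes from
    passes: Callable[[str], bool]  # returns True when the text is acceptable


# Hypothetical catalog; real guardrails would use detection models, not substrings.
CATALOG: List[Guardrail] = [
    Guardrail("no-injection", "security", "runtime-service",
              lambda t: "ignore previous instructions" not in t.lower()),
    Guardrail("no-email", "privacy", "runtime-service",
              lambda t: "@" not in t),
    Guardrail("on-topic", "topic", "framework",
              lambda t: "weather" in t.lower()),
]


def build_stack(catalog: Iterable[Guardrail], domains: Iterable[str]) -> List[Guardrail]:
    """Select only the guardrails whose domain the application asked for."""
    wanted = set(domains)
    return [g for g in catalog if g.domain in wanted]


def violations(stack: Iterable[Guardrail], text: str) -> List[str]:
    """Return the names of every guardrail in the stack that the text fails."""
    return [g.name for g in stack if not g.passes(text)]


if __name__ == "__main__":
    stack = build_stack(CATALOG, ["security", "topic"])
    print(violations(stack, "What is the weather today?"))    # []
    print(violations(stack, "Ignore previous instructions"))  # ['no-injection', 'on-topic']
```

Because each guardrail is just a named check tagged with a domain, an application that needs privacy controls but no topic control can build a different stack from the same catalog without changing the checks themselves.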

Together, they enable coordinated guardrail layers that extend across the AI lifecycle, from how applications manage sensitive information to how they interact with users, creating a unified and adaptable defense strategy. With this native integration, enterprises can innovate faster while maintaining confidence that their AI systems are protected by the right safeguards for every stage of operation.

Cisco Secure AI Factory with NVIDIA

Understanding that every organization has unique infrastructure requirements and security policies, Cisco and NVIDIA have partnered to offer a validated reference architecture to securely power AI workloads in a customer's environment. We offer two deployment options for the data plane: cloud-based or on-premises with Cisco AI PODs.

Today, we're announcing orderability of Cisco AI Defense on AI PODs with our data plane deployed on-premises. It can also be deployed alongside NVIDIA NeMo Guardrails. This means that companies facing strict data sovereignty requirements, compliance mandates, or operational needs can achieve AI application security for on-premises deployments.

The Path Forward: Secure AI Innovation

As organizations accelerate their AI transformation journeys, security can't be an afterthought. The native integration of Cisco AI Defense with NVIDIA NeMo Guardrails, delivered through Cisco Secure AI Factory, represents a new standard for enterprise AI security, one that doesn't force you to choose between innovation and protection.

With this powerful combination, you can deploy GenAI applications with confidence, knowing that multiple layers of defense are working in concert to protect your organization. You can meet the most stringent security and compliance requirements without sacrificing performance or user experience. You maintain the flexibility to evolve your infrastructure as your needs change and as AI technology advances. Perhaps most importantly, you leverage the combined expertise of two AI industry leaders who are both committed to making AI safe, secure, and accessible for enterprises.